Part 1 made the argument: serious artificial intelligence (AI) work needs a routing system for intelligence.

This part explains the protocol.

More agents only help when the work has boundaries. When those boundaries are missing, agents duplicate effort and make conflicting assumptions. They can also overstate weak evidence or spread decision-making across too many places.

The protocol prevents that drift.

It keeps the orchestrator accountable, separates roles, chooses the smallest useful workflow, and adds guardrails when they improve trust.

The Two Front Doors

My implementation of the protocol is called Pathway. The supporting implementation notes and protocol artifacts live in the Pathway Protocol GitHub repo.

Pathway has two short triggers:

- Think path

- Build path

Think path is for research, synthesis, decision briefs, and executive memos. It also covers requirements discovery, meeting analysis, and recommendations.

Build path is for coding, debugging, refactoring, and tests. It also covers codebase mapping, technical implementation, and local proof-of-concept work.

The phrases are short by design. I do not want to paste a large operating manual every time I start a task. The phrase activates the protocol.

The phrase is the front door.

Behind it is the system: an OpenAI GPT/Codex orchestrator, role-specific subagents, safety gates, and review passes. It also includes model lanes and a record of what happened.

The orchestrator makes the routing decision. It reads the prompt, identifies the task type, estimates risk, and checks source clarity. Then it decides whether Safe Lane is needed and chooses the model lane and reasoning level for each subtask.

That choice affects economics. A larger model, stronger reasoning level, longer context window, or repeated review pass changes the cost profile of the run. The protocol makes those choices explicit instead of letting every subtask inherit the most expensive default.

That routing is dynamic. A request can start as a simple Think path summary and become a higher-reasoning source-fidelity task if the sources conflict. A Build path task can start as a narrow edit and become a security or regression review if the code touches auth, data handling, or shared interfaces.
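The routing and escalation described above can be sketched in a few lines. This is a minimal illustration, not the Pathway implementation: the `Route` type, the `route` function, the reasoning labels, and the review lists are hypothetical names chosen for the example, and the sensitive areas are taken from the examples in the text.

```python
from dataclasses import dataclass
from enum import Enum


class Path(Enum):
    THINK = "think"
    BUILD = "build"


# Areas that trigger escalation on Build path (from the examples in the text).
SENSITIVE_AREAS = {"auth", "data handling", "shared interfaces"}


@dataclass
class Route:
    path: Path
    reasoning: str      # illustrative levels: "standard" or "high"
    reviews: list[str]  # review lenses to apply


def route(trigger: str, sources_conflict: bool = False,
          touched_areas: frozenset[str] = frozenset()) -> Route:
    """Pick a path from the trigger phrase, then escalate on risk signals."""
    phrase = trigger.strip().lower()
    if phrase == "think path":
        if sources_conflict:
            # Conflicting sources turn a summary into a source-fidelity task.
            return Route(Path.THINK, "high", ["accuracy", "source fidelity"])
        return Route(Path.THINK, "standard", ["accuracy"])
    if phrase == "build path":
        if touched_areas & SENSITIVE_AREAS:
            # Sensitive code turns a narrow edit into a security and regression review.
            return Route(Path.BUILD, "high", ["correctness", "security", "regression"])
        return Route(Path.BUILD, "standard", ["correctness"])
    raise ValueError(f"unknown trigger: {trigger!r}")
```

The same trigger phrase can produce different routes, which is the point: the phrase opens the door, and the risk signals decide how much review the task receives.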

Think Path

Think path is the knowledge-work lane.

A simple Think path task might need only a writer and a reviewer: summarize these notes, turn this source material into a clean update, or extract the key decisions.

A harder Think path task needs more structure. If sources conflict, the system preserves the conflict. If the output will drive a decision, it gets stronger review. If the source scope spans repos, folders, or a large document set, exploration happens before analysis.

The review dimensions are:

- accuracy

- completeness

- source fidelity

- decision quality

The protocol helps identify when a memo becomes an operating decision.

Build Path

Build path is the technical-work lane.

A small Build path task might be a focused bug fix with a targeted test. A larger task might touch shared interfaces, auth, deployment behavior, or release readiness. It might also connect docs and code.

The review dimensions are:

- correctness

- security

- completeness

- regression

The system scales with risk. A tiny test fix needs a targeted path. A release-facing auth change should be explored, implemented, validated, and reviewed through the right lenses.

This is where token economics become practical. The goal is to spend strong review where it matters.

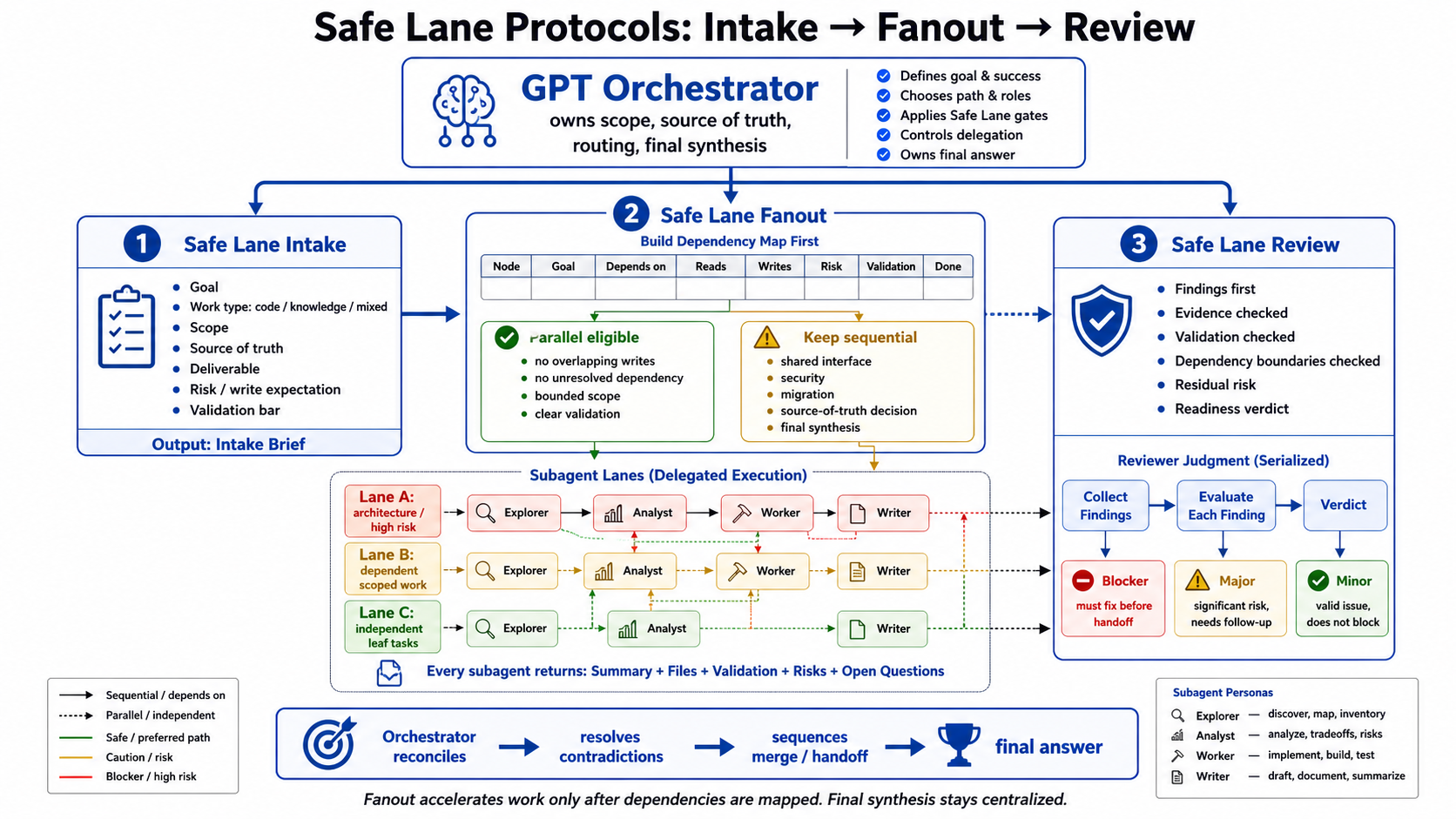

Safe Lane

Safe Lane is the guardrail layer.

It has three parts:

- Intake

- Fanout

- Review

Safe Lane Intake is used when the request has unclear scope or source of truth. It also helps when the deliverable, work type, or validation bar is unclear. It turns an ambiguous ask into a bounded brief before work begins.
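As a rough sketch, the Intake check can be read as a test for missing fields in a brief. The `Brief` type and both function names are hypothetical; the five fields mirror the items named in the paragraph above.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Brief:
    scope: Optional[str] = None
    source_of_truth: Optional[str] = None
    deliverable: Optional[str] = None
    work_type: Optional[str] = None
    validation_bar: Optional[str] = None


def unclear_fields(brief: Brief) -> list[str]:
    """Return the names of brief fields that are missing or blank."""
    return [f.name for f in fields(brief)
            if not (getattr(brief, f.name) or "").strip()]


def needs_intake(brief: Brief) -> bool:
    """Intake runs whenever at least one field is still unclear."""
    return bool(unclear_fields(brief))
```

A request with a scope and a deliverable but no stated source of truth would still go through Intake before any work starts.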

Safe Lane Fanout is used when the work is large enough to split. It starts only after a dependency map exists. Each node needs clear reads, writes, and risk. It also needs validation and a done condition. Work stays sequential when two agents would write to the same surface or when the dependency is unclear.

Safe Lane Review is the findings-first trust gate. It asks whether the output is ready to rely on. For code, that means correctness and regressions. It also means security and validation. For knowledge work, that means source fidelity and missing coverage. It also checks whether the conclusion is stronger than the evidence supports.

Safe Lane keeps multi-agent work from turning into chaos.

Parallelism is useful only when the dependencies are mapped and the nodes are genuinely independent.

The Dependency Map

Fanout starts with a map.

For each node, the orchestrator needs to know:

- goal

- dependencies

- reads

- writes

- risk

- validation

- done condition

Only then can the system decide whether a node can run in parallel.

A node is eligible for parallel work when it has bounded scope, clear validation, and a reviewable artifact to return. It also needs clean dependencies and no overlapping writes.

If any of that is missing, the work stays sequential.
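The eligibility rule can be sketched as a pairwise check over map nodes. The `Node` type and `can_run_in_parallel` are hypothetical names, and the sketch makes two simplifying assumptions: only direct dependencies are checked, and a "surface" is represented as a plain string such as a file path.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    goal: str
    dependencies: set[str] = field(default_factory=set)
    reads: set[str] = field(default_factory=set)
    writes: set[str] = field(default_factory=set)
    risk: str = "low"
    validation: str = ""
    done_condition: str = ""


def can_run_in_parallel(a: Node, b: Node) -> bool:
    """True only if both nodes are specified, independent, and write to disjoint surfaces."""
    for node in (a, b):
        # A node without a goal, validation, or done condition stays sequential.
        if not (node.goal and node.validation and node.done_condition):
            return False
    # Direct dependency in either direction forces sequencing.
    if a.name in b.dependencies or b.name in a.dependencies:
        return False
    # Two agents must never write to the same surface.
    if a.writes & b.writes:
        return False
    return True
```

Anything the check cannot prove safe falls back to sequential work, which matches the default in the text.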

That is the difference between multi-agent orchestration and agent sprawl.

Handoff Rules

Every delegated task needs a clear contract:

- exact goal

- bounded scope

- source of truth

- reads

- writes

- expected artifact

- validation command or reviewable proof

- done condition

Every subagent returns:

- summary

- files or sources touched

- validation

- risks

- open questions

The orchestrator reconciles the work, resolves contradictions, decides sequencing, and owns the final answer.

Subagents do not synthesize the whole initiative independently.
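One way to make the contract and the return shape concrete is a pair of typed records. This is a sketch under assumptions: the class names, field types, and the `out_of_scope_touches` helper are hypothetical, and the fields simply mirror the two lists above.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class HandoffContract:
    goal: str
    scope: str
    source_of_truth: str
    reads: tuple[str, ...]
    writes: tuple[str, ...]
    expected_artifact: str
    validation: str          # validation command or reviewable proof
    done_condition: str


@dataclass
class SubagentReport:
    summary: str
    touched: list[str]       # files or sources touched
    validation: str
    risks: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)


def out_of_scope_touches(contract: HandoffContract, report: SubagentReport) -> list[str]:
    """List anything the subagent touched that the contract did not name."""
    allowed = set(contract.reads) | set(contract.writes)
    return [item for item in report.touched if item not in allowed]
```

A non-empty result gives the orchestrator a concrete reason to question the handoff before reconciling it with other work.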

Local Qwen As A Helper Lane

Local Qwen fits into this system as a helper lane.

The public abstraction:

- it is a local open-weight helper model

- it runs behind a local endpoint

- it is used only for bounded first-pass work

- it can help as explorer, analyst, or first-pass writer

- it cannot be orchestrator, worker, reviewer, or final authority

- GPT/Codex reviews every handoff

Local models can reduce the burden of first-pass work while keeping judgment in the main system.

Qwen can help map a document set, extract open questions, draft tradeoffs, or outline implementation slices. Decision work, release judgment, security conclusions, and final user-facing answers return to GPT/Codex.

The role boundary is explicit:

| Role | Qwen allowed? | Why |

|---|---|---|

| Explorer | Yes | Good fit for source maps, file maps, architecture sketches, and document inventory |

| Analyst | Yes | Good fit for first-pass tradeoffs, risks, criteria, and implementation slices |

| Writer | Limited | Useful for first-pass outlines and drafts from supplied evidence |

| Orchestrator | No | Scope, source of truth, routing, and final synthesis stay with GPT/Codex |

| Worker | No | File edits, implementation, git actions, and system changes stay with GPT/Codex |

| Reviewer | No | Correctness, security, source fidelity, and readiness verdicts stay with GPT/Codex |
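The role boundary in the table can be expressed as a small gate. The lane labels `"local-qwen"` and `"gpt-codex"` and the function name `lane_for` are hypothetical; the role sets come from the table. The sketch assumes delegation must be switched on explicitly.

```python
QWEN_ALLOWED_ROLES = frozenset({"explorer", "analyst", "writer"})
GPT_ONLY_ROLES = frozenset({"orchestrator", "worker", "reviewer"})


def lane_for(role: str, qwen_enabled: bool = False) -> str:
    """Return the lane that may take a role; blocked roles never reach Qwen."""
    key = role.strip().lower()
    if key in GPT_ONLY_ROLES:
        return "gpt-codex"
    if key in QWEN_ALLOWED_ROLES:
        # Eligible roles still stay with GPT/Codex unless delegation is on.
        return "local-qwen" if qwen_enabled else "gpt-codex"
    raise ValueError(f"unknown role: {role!r}")
```

Because the blocked roles are checked first, turning delegation on cannot widen what the helper lane is allowed to do.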

The Pathway split is also explicit:

| Path | Qwen can help with | GPT/Codex keeps |

|---|---|---|

| Think path | Source inventory, source maps, open-question extraction, tradeoff drafts, first-pass outlines, and first-pass briefs | Final analysis, source-fidelity review, decision quality, and user-facing synthesis |

| Build path | File mapping, architecture summaries, likely entry points, validation criteria, risks, and stop conditions | Implementation, tests, security review, regression review, final acceptance, and final report |

My current local helper lane uses this shape:

| Setting | Current posture |

|---|---|

| Runtime | Local Apple MLX-backed endpoint |

| Model lane | Local Qwen helper |

| Default state | Off by default; enabled intentionally for bounded work |

| Allowed roles | Explorer, analyst, first-pass writer |

| Blocked roles | Orchestrator, worker, reviewer, specialized reviewers, final authority |

| Prompt posture | Evidence-bound, conservative, source-grounded |

| Temperature | Low, currently 0.1 |

| Top P | Currently 0.7 |

| Max output | Currently 2200 tokens |

| Context budget | Currently 20000 tokens |
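The settings above can be held in one immutable record. The class and field names are hypothetical; the values are the ones in the table. The budget check assumes the context budget has to cover both the prompt and the reserved output, which the table does not state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LocalHelperConfig:
    enabled: bool = False            # off by default
    temperature: float = 0.1
    top_p: float = 0.7
    max_output_tokens: int = 2200
    context_budget_tokens: int = 20000


def fits_budget(cfg: LocalHelperConfig, prompt_tokens: int) -> bool:
    """Assumption: prompt plus reserved output must fit inside the context budget."""
    return prompt_tokens + cfg.max_output_tokens <= cfg.context_budget_tokens
```

Under that assumption, a prompt of up to 17800 tokens fits the current settings, and anything larger needs tighter source packaging before delegation.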

Every substantive handoff should be classified:

| Classification | Meaning |

|---|---|

| Accepted | Accurate and useful with no meaningful correction |

| Revised | Useful, but incomplete, source-limited, or needing correction |

| Rejected | Inaccurate, unsupported, off-scope, unsafe, or not useful enough to rely on |

That classification matters. It keeps local model use from becoming hidden trust.
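A minimal sketch of how the classification could control what reaches the final answer. The enum mirrors the table; the `usable_text` helper and its rule that a revised handoff must carry the reviewer's corrected text are assumptions made for illustration.

```python
from enum import Enum
from typing import Optional


class Classification(Enum):
    ACCEPTED = "accepted"
    REVISED = "revised"
    REJECTED = "rejected"


def usable_text(classification: Classification, draft: str,
                revision: Optional[str] = None) -> Optional[str]:
    """Return the text the orchestrator may rely on, or None if nothing is usable."""
    if classification is Classification.ACCEPTED:
        return draft
    if classification is Classification.REVISED:
        if revision is None:
            raise ValueError("a revised handoff needs the reviewer's corrected text")
        return revision
    # Rejected handoffs contribute nothing to the final answer.
    return None
```

Making the verdict a required input is what keeps the helper's draft from flowing into the answer unreviewed.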

Evals And Readiness

The protocol also needs validation.

For Pathway, that means checking:

- the routing decision

- the selected persona flow

- Safe Lane gates

- Qwen eligibility

- artifacts created

- validation run

- review completed

- residual risks

For local Qwen, that means checking:

- whether the local server is ready

- whether delegation is on

- whether only allowed roles are used

- whether the handoff has enough source grounding

- whether GPT/Codex accepted, revised, or rejected the result

The eval loop:

| Step | Purpose |

|---|---|

| Delegate | Send a bounded helper task to Qwen only when the role is eligible |

| Measure | Record tokens, elapsed time, role, path, task, and runtime behavior |

| Review | Have GPT/Codex check the handoff against the evidence |

| Classify | Mark the handoff as accepted, revised, or rejected |

| Improve | Adjust routing, prompts, schemas, or context packaging when failures repeat |

| Re-test | Run the same validation path again before trusting the change |

That loop is a key feature of the local model lane.
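The Measure and Classify steps imply a record per handoff. The sketch below shows one possible shape; `EvalRecord`, its field names, and the `summarize` helper are hypothetical, and the sample values in the usage lines are made up for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class EvalRecord:
    role: str
    path: str
    task: str
    tokens: int
    elapsed_s: float
    classification: str  # "accepted", "revised", or "rejected"


def summarize(records: list[EvalRecord]) -> dict:
    """Count classifications and compute the share of rejected handoffs."""
    counts = Counter(r.classification for r in records)
    total = len(records)
    return {
        "total": total,
        "accepted": counts["accepted"],
        "revised": counts["revised"],
        "rejected": counts["rejected"],
        "rejected_rate": counts["rejected"] / total if total else 0.0,
    }


# Illustrative records only; these are not measurements from the article.
log = [
    EvalRecord("explorer", "think", "source inventory", 1800, 12.5, "accepted"),
    EvalRecord("analyst", "think", "tradeoff draft", 2100, 15.0, "revised"),
]
print(summarize(log))
```

A rising rejected rate for one role or task type is the kind of repeated failure the Improve step is meant to act on.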

The local model is treated as a lane that can improve through measured use. When Qwen misses a source fact, overstates a claim, ignores a constraint, or returns a weak handoff, the failure becomes input to the next routing decision. It can also shape the next prompt, schema, or context bundle.

The self-improvement loop looks like this:

| Signal | Adjustment |

|---|---|

| Missed source facts | Improve source packaging or require tighter source tagging |

| Unsupported assumptions | Move uncertainty into do_not_treat_as_decided and tighten the prompt |

| Weak role fit | Route less work to Qwen or remove that role from the helper chain |

| Useful but incomplete handoff | Keep the lane, but revise the role contract or schema |

| Rejected handoff | Stop using that pattern until the eval passes again |

| Token share too high | Use a smaller Qwen role chain and return more synthesis to GPT/Codex |

| Token share too low | Give Qwen more first-pass inventory or extraction work when quality supports it |

This keeps the local lane honest. The system evaluates handoffs, measures usefulness, records failure patterns, and changes the protocol only after those changes pass the same validation path.
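The "rejected handoff" rule in the table, stop using a pattern until the eval passes again, can be sketched as a small gate. The `PatternGate` class and the idea of naming patterns with a string key are assumptions for the example.

```python
class PatternGate:
    """Tracks which delegation patterns are currently blocked."""

    def __init__(self) -> None:
        self._blocked: set[str] = set()

    def record_handoff(self, pattern: str, classification: str) -> None:
        # A rejected handoff blocks the pattern that produced it.
        if classification == "rejected":
            self._blocked.add(pattern)

    def record_eval_pass(self, pattern: str) -> None:
        # Only a passing eval on the same pattern lifts the block.
        self._blocked.discard(pattern)

    def is_allowed(self, pattern: str) -> bool:
        return pattern not in self._blocked
```

Accepted or revised handoffs do not unblock a pattern in this sketch; only a recorded eval pass does, which mirrors the re-test step.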

For evidence-heavy Think path work, the stricter schema is:

| Required field | Purpose |

|---|---|

| source_facts | Facts that come directly from supplied evidence |

| explicit_decisions | Decisions stated in the source material |

| open_questions | Unknowns that should not be treated as settled |

| risks | Material concerns or failure modes |

| recommendations | Suggested next steps based on the evidence |

| do_not_treat_as_decided | Plausible but unsupported claims that must stay out of the final answer |
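A handoff can be checked against the stricter schema before review begins. The field names come from the schema above; the `validate_handoff` function and the assumption that every field holds a list of entries are illustrative choices.

```python
REQUIRED_FIELDS = (
    "source_facts",
    "explicit_decisions",
    "open_questions",
    "risks",
    "recommendations",
    "do_not_treat_as_decided",
)


def validate_handoff(payload: dict) -> list[str]:
    """Return a list of schema problems; an empty list means the shape is valid."""
    problems = []
    for name in REQUIRED_FIELDS:
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], list):
            # Assumption: each field is a list, possibly empty.
            problems.append(f"{name} must be a list")
    return problems
```

The check covers shape only. Whether each entry in `source_facts` is actually supported by the evidence remains a GPT/Codex review question.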

The target for eligible Think path delegation is useful first-pass work that keeps final quality intact:

| Metric | Target |

|---|---|

| Qwen token share | Roughly 60-70 percent of eligible Think path work |

| Handoff quality | Accepted, or revised and still useful |

| Final answer quality | No unsupported person, date, ownership, commitment, decision, or source claim survives GPT/Codex review |
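The token-share target can be checked with simple arithmetic. The sketch assumes the share is Qwen tokens divided by all tokens spent on the eligible work, which is one reading of the metric; the function names and the signal labels are hypothetical.

```python
def qwen_token_share(qwen_tokens: int, gpt_tokens: int) -> float:
    """Share of tokens handled by the local helper for one eligible task."""
    total = qwen_tokens + gpt_tokens
    if total <= 0:
        raise ValueError("no tokens recorded")
    return qwen_tokens / total


def share_signal(share: float, low: float = 0.60, high: float = 0.70) -> str:
    """Map a share onto the 'too high' and 'too low' signals in the adjustment table."""
    if share > high:
        return "too high"
    if share < low:
        return "too low"
    return "on target"
```

For example, 6500 Qwen tokens against 3500 GPT/Codex tokens gives a share of 0.65, which sits inside the target band. The share only matters when handoff quality holds, so this check belongs next to the classification results and never in place of them.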

Evals should prove that the protocol does what it claims.

The Repeatable Pattern

The pattern is:

- Start with the work type.

- Route it through Think path or Build path.

- Decide the risk and validation bar.

- Choose the smallest useful persona flow.

- Use Safe Lane when scope, dependency, or trust requires it.

- Use local models only for bounded helper work.

- Keep final judgment centralized.

- Record enough evidence that the work can be reviewed.

That is the system I wanted: a practical orchestration layer for spending intelligence where it matters.

Closing

Serious AI building is heading toward orchestration.

The most valuable builders will know how to divide work, route judgment, preserve evidence, and use different levels of intelligence while keeping accountability intact.

The strongest model stays responsible. Smaller agents do bounded work. Local models help where they can. Every important answer comes back through review.

Token cost was the pressure.

Orchestration became the answer.