I started building a multi-agent orchestration protocol because token cost became an economic constraint.

Token cost shows up in concrete ways: rate limits, token caps, included usage running out, and higher subscription tiers. It also shows up when every part of a workflow uses a frontier model.

The expensive pattern is asking one strong model to carry every part of the work at one level of intelligence and reasoning.

The cost is not just the sticker price of one prompt. Larger and more capable models usually cost more per token and take more compute to run. Higher reasoning levels can also spend more tokens on intermediate thinking, review, and retries. Total cost becomes the price per token multiplied by the amount of context, output, reasoning, and repeated work the system creates.
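To make that multiplication concrete, here is a minimal sketch of the arithmetic. Everything in it is an assumption for illustration: the `Call` fields, the `call_cost` helper, the prices, and the token counts are made up, and billing reasoning tokens at the output rate is a simplification rather than any vendor's actual pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Call:
    context_tokens: int     # prompt plus any attached context
    reasoning_tokens: int   # intermediate thinking (assumed billed as output)
    output_tokens: int      # the visible answer
    attempts: int = 1       # retries and repeated work multiply everything


def call_cost(call: Call, input_price: float, output_price: float) -> float:
    """Estimated cost of one step; prices are per million tokens."""
    input_cost = call.context_tokens * input_price / 1_000_000
    output_cost = (call.reasoning_tokens + call.output_tokens) * output_price / 1_000_000
    return call.attempts * (input_cost + output_cost)


# Hypothetical numbers for illustration only, not real pricing.
source_map = Call(context_tokens=20_000, reasoning_tokens=5_000,
                  output_tokens=1_000, attempts=2)
frontier = call_cost(source_map, input_price=10.0, output_price=40.0)
helper = call_cost(source_map, input_price=0.5, output_price=2.0)
print(f"frontier lane: ${frontier:.2f}, helper lane: ${helper:.3f}")
```

With these made-up prices, the same step costs twenty times less in the helper lane. The retry multiplier applies to both, which is why repeated work matters as much as the per-token price.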

My read of the industry is that compute will stay constrained for a while. Per-token prices may fall in some places, but agentic work pushes total usage in the other direction. More context, inference, review, and test-time reasoning all add demand. The constraint a solo builder feels traces back to data centers, graphics processing units (GPUs), and high-speed memory. It also traces back to power and local hardware.

Agents create a way out of that pattern. They let us stop treating work as one large prompt and start treating it as a set of roles.

For a solo builder, the question becomes:

How do I keep using the best intelligence available while reserving the most expensive model and highest reasoning level for the tasks that need them?

That question became the starting point for the system.

The Principle

The principle is:

Use the most capable intelligence available, but only where the task actually needs it.

I still want frontier models. I want the strongest reasoning model available for hard synthesis, final judgment, risky coding decisions, and security review. I want it anywhere being wrong costs more than the tokens.

Many parts of a workflow need care before they need final-authority intelligence. Source mapping, open-question extraction, and rough outlines can run in a different lane. Likely-file identification and first-pass comparisons can too. They still need to come back for review.

Once you see artificial intelligence (AI) work as a sequence of roles instead of one model response, you can start routing the work.

From Prompting To Orchestration

The shift for me was moving from “ask the model to do the thing” to “route the work through a controlled system.”

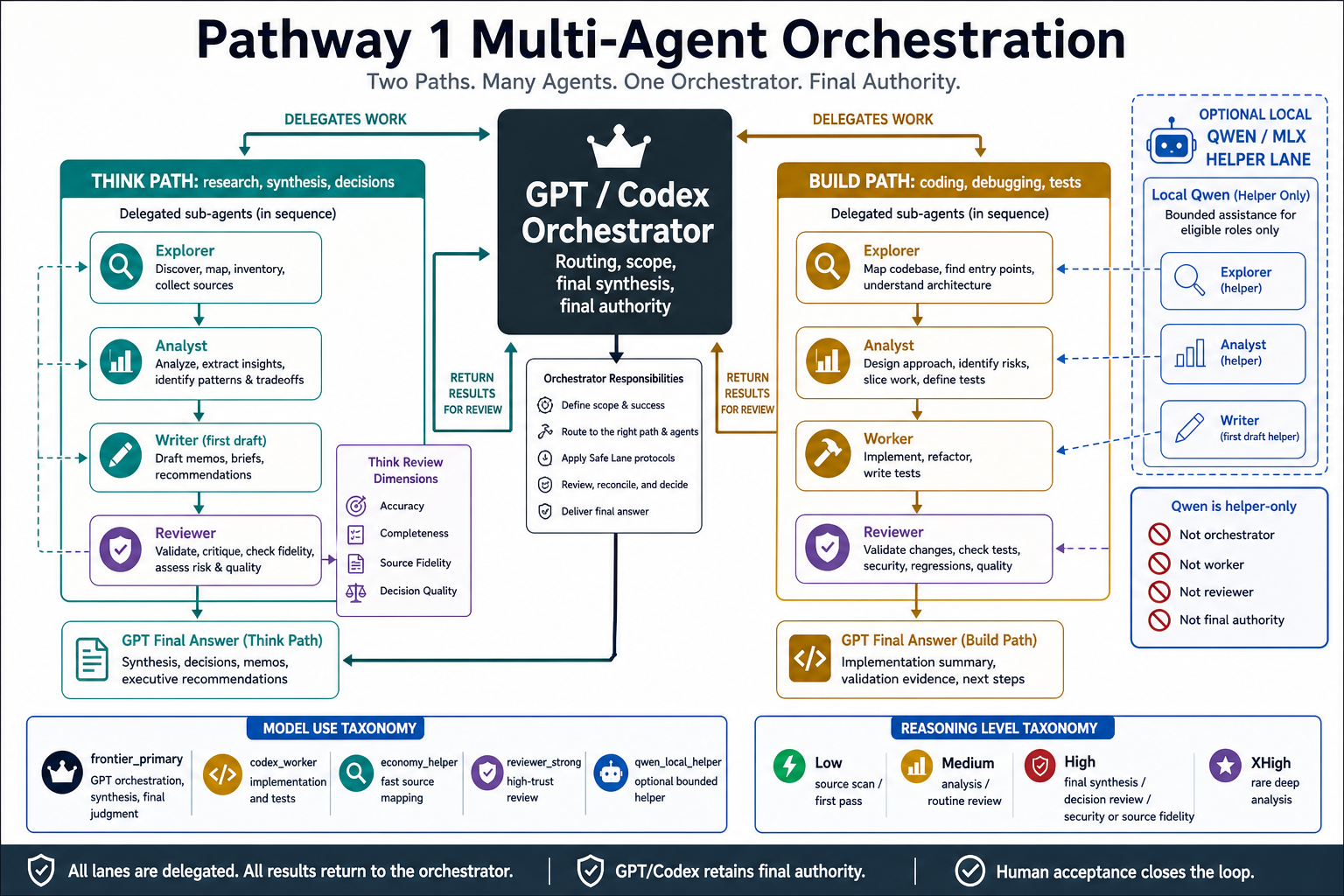

At the high level, the system needs four parts:

- an orchestrator that owns scope, routing, final synthesis, and final authority

- role specialists that handle bounded subtasks

- model lanes that match task difficulty and cost

- review gates that decide when output is ready to trust

That is the architecture.

The supporting implementation notes and protocol artifacts live in the Pathway Protocol GitHub repo.

The orchestrator agent owns the run. It breaks the request into smaller jobs and sends those jobs to helper agents. Then it pulls their results back into one answer. An explorer agent might map files or sources. An analyst agent might identify tradeoffs. A writer might draft. A worker might build or test. A reviewer might check the result.
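The flow in that paragraph can be sketched as a small loop. This is a simplified illustration, not the Pathway Protocol implementation: `Job`, `run`, the `plan` callable, and the stub helpers are hypothetical names, and the final synthesis step is reduced to joining labeled results.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Job:
    role: str   # e.g. "explorer", "analyst", "writer"
    brief: str  # the bounded instruction handed to that helper


# A helper takes a brief and returns text. Real helpers would call a model.
Helper = Callable[[str], str]


def run(request: str,
        plan: Callable[[str], List[Job]],
        helpers: Dict[str, Helper]) -> str:
    """Break the request into jobs, dispatch each one, and merge the results."""
    results = []
    for job in plan(request):
        helper = helpers[job.role]  # KeyError if a job names an unknown role
        results.append((job.role, helper(job.brief)))
    # Placeholder synthesis: a real orchestrator would reason over the results.
    return "\n".join(f"[{role}] {text}" for role, text in results)


# Stub plan and helpers, for illustration only.
def simple_plan(request: str) -> List[Job]:
    return [Job("explorer", f"map sources for: {request}"),
            Job("writer", f"draft a summary of: {request}")]


stub_helpers = {
    "explorer": lambda brief: f"done ({brief})",
    "writer": lambda brief: f"done ({brief})",
}

print(run("compare two caching strategies", simple_plan, stub_helpers))
```

Keeping the plan and the helpers as plain callables means the orchestrator stays the only place where scope and final synthesis are decided.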

The orchestrator also decides which model lane and reasoning level each part of the work deserves. It evaluates the prompt, task type, and risk. It also checks source clarity, validation bar, and final use of the output. A low-risk source map needs a smaller model and reasoning budget than a security review, final recommendation, or release decision.

The Tuned Team

The tuned team is intentionally small.

The core roles are:

- orchestrator: routes the work and owns final synthesis

- explorer: maps files, sources, documents, entry points, and unknowns

- analyst: turns evidence into options, risks, tradeoffs, criteria, and implementation slices

- worker: makes bounded technical changes after scope is clear

- writer: drafts human-facing artifacts from evidence

- reviewer: checks readiness before trust

Some work needs specialized review:

- security reviewer

- regression reviewer

- source fidelity reviewer

- decision reviewer

The value is separation: each role does one bounded job, and its output can be checked before the next role relies on it.

Local Models Fit, But Carefully

Local models add another lane.

They are improving quickly enough to matter for real work. For a solo builder, the constraint shifts back to hardware. That means memory, GPU access, model size, and runtime reliability. It also takes patience to keep the lane working.

I treat local models as helper lanes.

In my setup, Qwen runs locally as an open-weight helper. It can help with bounded first-pass roles:

- explorer

- analyst

- first-pass writer

Final authority stays out of the local model lane:

- orchestrator

- worker

- reviewer

- security review

- final decisions

- final user-facing conclusions

That boundary makes the local model useful. Qwen can map sources, draft tradeoffs, outline options, and summarize supplied evidence. OpenAI GPT/Codex reviews the handoff. Then it classifies the result as accepted, revised, or rejected.
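A minimal sketch of that boundary, assuming a reviewer that returns a verdict plus optional revised text. The names `Verdict`, `LOCAL_ALLOWED_ROLES`, and `review_handoff` are hypothetical, and the reviewer here is a plain callable standing in for the GPT/Codex review step.

```python
from enum import Enum
from typing import Callable, Optional, Tuple


class Verdict(Enum):
    ACCEPTED = "accepted"
    REVISED = "revised"
    REJECTED = "rejected"


# Roles the local lane may take; everything else stays with the primary model.
LOCAL_ALLOWED_ROLES = frozenset({"explorer", "analyst", "first-pass writer"})

# A reviewer reads a draft and returns (verdict, revised text or None).
Reviewer = Callable[[str], Tuple[Verdict, Optional[str]]]


def review_handoff(role: str, draft: str,
                   reviewer: Reviewer) -> Tuple[Verdict, Optional[str]]:
    """Classify a local-model handoff and return the text that may be used."""
    if role not in LOCAL_ALLOWED_ROLES:
        raise ValueError(f"role {role!r} is outside the local helper lane")
    verdict, revised = reviewer(draft)
    if verdict is Verdict.ACCEPTED:
        return verdict, draft
    if verdict is Verdict.REVISED:
        return verdict, revised
    return verdict, None  # rejected drafts never flow downstream
```

The point of the sketch is that a local draft has no path to the user except through the reviewer's verdict.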

Model Use Becomes A Taxonomy

The taxonomy makes routing concrete.

Instead of asking which model should do everything, the architecture asks:

- What kind of subtask is this?

- How much reasoning does it need?

- What is the risk of being wrong?

- Does the output need final review?

- Is this safe for a local helper model?

The orchestrator asks those questions dynamically for each task. It evaluates the actual prompt and routes each subtask to enough intelligence, enough reasoning, and enough review for that moment.

Those questions lead to model lanes:

| Lane | Used for | Not used for | Review requirement |
|---|---|---|---|
| Frontier primary | Orchestration, hard synthesis, complex coding decisions, and final judgment | Routine source scanning or low-risk first drafts | Owns or performs final review |
| Coding worker | Implementation, tests, debugging, and refactoring | Final release judgment or broad strategy decisions | Reviewed before trust |
| Strong reviewer | Correctness, security, regression, source fidelity, and readiness checks | First-pass drafting or bulk exploration | Findings are reconciled by the orchestrator |
| Economy helper | Low-risk exploration, source mapping, and quick comparison work | High-stakes conclusions or final authority | Spot-checked or reviewed based on risk |
| Local helper model | Bounded first-pass source mapping, tradeoffs, outlines, and summaries from supplied evidence | Orchestration, implementation, review, security decisions, or final user-facing conclusions | GPT/Codex accepts, revises, or rejects the handoff |

They also lead to reasoning levels:

| Reasoning level | Best fit | Avoid using it for |
|---|---|---|
| Low | Quick routing, light source scanning, small summaries, and obvious classifications | Ambiguous decisions, security-sensitive work, or source conflict |
| Medium | Normal analysis, routine coding, implementation planning, and everyday synthesis | Final review of high-risk work or hard strategic judgment |
| High | Complex decisions, source fidelity, correctness review, risky implementation choices, and important recommendations | Bulk first-pass work where cheaper lanes are enough |
| Extra high | Rare deep analysis where uncertainty, stakes, or reversibility justify the cost | Default workflow steps or tasks that mainly need inventory |

This is the practical meaning of “just enough intelligence”: keep the strongest intelligence focused on the places where it changes the outcome.
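The lane and reasoning tables above can be read as a small lookup. The sketch below is one possible reading, not the protocol's actual routing logic: the `Subtask` fields, the task kinds, the three risk labels, and the order of the rules are all assumptions. It leaves "extra high" out on purpose, on the assumption that the rare cases are chosen deliberately rather than by default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subtask:
    kind: str                 # "exploration", "implementation", "review", "synthesis"
    risk: str                 # "low", "medium", or "high"
    local_safe: bool = False  # is supplied-evidence, first-pass work acceptable?


def choose_lane(task: Subtask) -> str:
    """Map a subtask to a lane; rule order is an illustrative assumption."""
    if task.kind == "review":
        return "strong reviewer"
    if task.kind == "synthesis" or task.risk == "high":
        return "frontier primary"
    if task.kind == "implementation":
        return "coding worker"
    if task.local_safe and task.risk == "low":
        return "local helper model"
    return "economy helper"


def choose_reasoning(task: Subtask) -> str:
    """Reasoning level simply tracks risk in this sketch."""
    return {"low": "low", "medium": "medium", "high": "high"}[task.risk]


source_map = Subtask(kind="exploration", risk="low", local_safe=True)
print(choose_lane(source_map), choose_reasoning(source_map))
```

A real router would weigh more signals, such as source clarity and the final use of the output, but even this crude version keeps low-risk exploration off the frontier lane.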

What This Changes

The old mental model was:

Ask a smart model for an answer.

The new mental model is:

Build a routing system for work.

That shift changes the operator’s job.

The operator designs the flow of intelligence.

Multi-agent orchestration becomes a cost-control pattern, a quality-control pattern, and a judgment-preservation pattern at the same time.

Bridge To Part 2

This is the argument for the architecture.

A tuned team of agents is useful only when the work stays bounded, the handoffs stay reviewable, and final judgment stays centralized.

That is where the protocol matters.

Part 2 is about the mechanics: the two front doors, the role contracts, and the Safe Lane guardrails. It also covers the dependency map and the local model handoff rules. Those mechanics keep multi-agent work from becoming agent sprawl.